# What Is WebMCP? The Web Standard That Makes Your Website AI-Agent Ready

WebMCP lets websites expose structured tools to AI agents. Learn why the revenue case is urgent, how it works, and exactly how to prepare your website.

**Published:** February 13, 2026

**Author:** JP Garbaccio

---

A new web standard dropped three days ago. You're trying to figure out whether it matters.

That's the right instinct. Most standards don't deserve your attention; the web is full of specs that never gained traction. But WebMCP is different. Google and Microsoft co-authored it. It's being incubated in a W3C Community Group. And a working early preview shipped in Chrome 146, behind an experimental flag, on day one.

More importantly, it solves a problem that's already costing businesses money: AI agents can't interact with your website reliably.

Right now, when an AI agent tries to book a flight or complete a purchase on your site, it guesses. It scrapes. It clicks blindly and hopes for the best. WebMCP replaces that guesswork with a structured contract — a clear menu of actions your website offers to AI agents.

This article covers what WebMCP is, why the revenue case is urgent, how it works, and how to prepare.

## What Is WebMCP?

WebMCP is a proposed web standard, incubated at the W3C, that lets websites expose structured tools to AI agents through the browser. Instead of agents scraping your pages or guessing at button labels, your website publishes a tool contract: a structured menu of actions an AI agent can discover and call directly.

Think of it as handing someone a clear instruction manual versus leaving them to figure out your kitchen by opening every drawer.

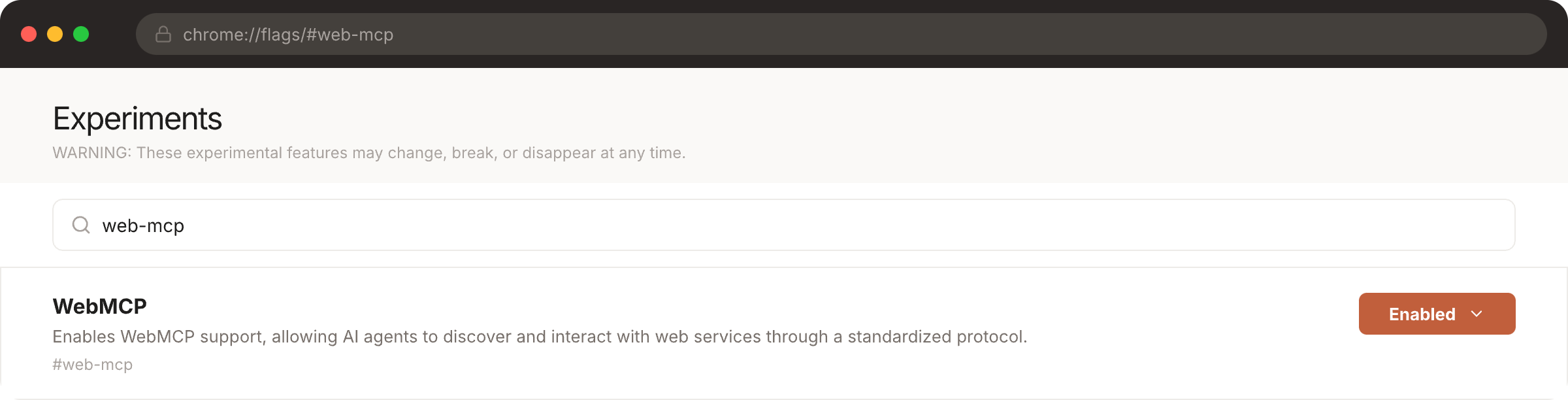

The standard was [published on 10 February 2026](https://developer.chrome.com/blog/webmcp-epp) as a W3C Draft Community Group Report. It shipped as an early preview in Chrome 146 behind an experimental flag. Google and Microsoft co-authored the specification, and it's being incubated through the W3C's Web Machine Learning Community Group — the same kind of cross-competitor governance that gave us Schema.org in 2011.

WebMCP builds on the [Model Context Protocol (MCP)](https://www.searchable.com/blog/integrate-searchable-data-directly-to-claude-code-and-cursor-with-mcp), Anthropic's open standard for connecting AI to external tools. But where MCP handles backend, server-to-server communication, WebMCP operates in the browser. The user is present, authenticated, and in control.

That distinction matters. AI agents inherit the user's existing session, permissions, and context. No separate API keys or OAuth flows needed.

The origin story adds credibility: WebMCP started as a solution to the authentication problem for AI agents at Amazon, where Alex Nahas — now at Arcade.dev — first tackled the challenge of letting agents interact with websites reliably and securely.

Glenn Gabe, founder of G-Squared Interactive, called WebMCP "a big deal," noting that agents can now bypass the UI entirely through structured tool calls.

**Why this matters in plain terms:** today, an AI agent trying to book a flight on your website has to screenshot the page, interpret pixels, and guess where to click. With WebMCP, your website simply tells the agent: "Here are the actions you can perform, here are the inputs I need, and here's how to call them." One structured function call replaces dozens of brittle interactions.

## Why WebMCP Matters: The Revenue Case for the Agentic Web

AI agents are already driving significant commerce — and the numbers are accelerating fast. During Cyber Week 2025, AI-assisted purchases influenced $67 billion in global sales, according to [Salesforce](https://www.salesforce.com/news/press-releases/2025/12/05/cyber-week-ai-agents-sales/) — that's one in five orders involving an AI agent. Businesses using AI chat solutions saw conversion rates jump from 3.1% to 12.3%, a fourfold increase over non-AI experiences.

This isn't a niche trend. It's a structural shift in how commerce works.

[McKinsey projects](https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-agentic-commerce-opportunity-how-ai-agents-are-ushering-in-a-new-era-for-consumers-and-merchants) that [agentic commerce](https://www.searchable.com/blog/amazon-buy-for-me-agentic-commerce) will reach $1 trillion in US retail revenue by 2030. Globally, McKinsey puts the figure at $3 to $5 trillion.

[Bain & Company](https://www.bain.com/insights/2030-forecast-how-agentic-ai-will-reshape-us-retail-snap-chart/) estimates 25% of all US e-commerce will be agent-driven within four years. [Morgan Stanley](https://www.morganstanley.com/insights/articles/agentic-commerce-market-impact-outlook) puts the US figure at $190 to $385 billion. The projections vary. They all point the same direction: up, fast.

On the enterprise side, [Gartner predicts](https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025) that 40% of enterprise applications will embed task-specific AI agents by the end of 2026 — up from less than 5% in 2025. McKinsey's research shows AI-powered personalisation delivers a 10 to 15% revenue lift on average, with best-in-class implementations reaching 25%.

**So where does WebMCP fit?**

Think about what happened with Schema.org. When websites started adding structured data in 2011, pages with proper markup saw [25% higher click-through rates](https://www.searchable.com/blog/what-is-ai-search-engine-optimisation) in Google results. That advantage compounded. The businesses that implemented structured data early captured disproportionate organic traffic for years.

WebMCP is the same inflection point — but for actions, not just visibility. Schema.org told search engines what your content *is*. WebMCP tells AI agents what your website can *do*. And with agents already influencing billions in commerce, the stakes are higher this time around.

The [Universal Commerce Protocol](https://www.searchable.com/blog/universal-commerce-protocol-ucp-2026) is already standardising agent-driven checkout flows. WebMCP provides the browser-level infrastructure that makes those flows possible. Without it, AI agents fall back to guessing — scraping your DOM, interpreting pixels, and clicking buttons blindly. The sites with clean tool contracts will be the ones agents choose to transact through.

The question isn't whether AI agents will interact with your website. They already do. WebMCP determines whether that interaction converts.

## How WebMCP Works: Declarative and Imperative APIs

WebMCP gives websites two ways to talk to AI agents. The Declarative API makes your existing HTML forms agent-readable. The Imperative API lets you build custom tools in JavaScript. The browser sits in the middle, keeping humans in the loop. Which approach you use depends on your site.

### The Declarative API: Start With What You Have

The Declarative API is the low-lift option. If your site has HTML forms (search bars, filters, contact forms, booking widgets), you add attributes to each form, such as a name and a description of what it does, that help AI agents find and use it. The agent reads the form, knows what inputs are needed, and submits it directly.

No new JavaScript. No API endpoints to build. You're making existing forms machine-readable. Same idea that made Schema.org work, applied to actions instead of content. For most sites, this is where WebMCP starts.
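To make "machine-readable" concrete, here is a minimal sketch of the mapping involved: a form's fields become the same kind of input schema you would otherwise write by hand for the Imperative API. The `formToToolContract` helper and the plain-object form description are hypothetical. In a real browser, the WebMCP implementation performs this mapping from attributes defined in the spec, and its exact rules may differ.

```javascript
// Hypothetical sketch: derive a WebMCP-style tool contract from a plain
// description of an HTML form. A real browser does this mapping itself;
// this code only illustrates the idea.

// Simplified mapping from HTML input types to JSON Schema types (assumed).
const INPUT_TYPE_TO_SCHEMA = {
  text: "string",
  search: "string",
  email: "string",
  date: "string",
  number: "number",
  checkbox: "boolean",
};

function formToToolContract(form) {
  const properties = {};
  const required = [];
  for (const field of form.fields) {
    properties[field.name] = {
      type: INPUT_TYPE_TO_SCHEMA[field.type] ?? "string",
      // In this sketch the visible label doubles as the parameter description.
      description: field.label,
    };
    if (field.required) required.push(field.name);
  }
  return {
    name: form.toolName,
    description: form.toolDescription,
    inputSchema: { type: "object", properties, required },
  };
}

// Example: a simple flight search form.
const flightSearch = {
  toolName: "search_flights",
  toolDescription: "Search for available flights between two airports on a date",
  fields: [
    { name: "origin", type: "text", label: "From (airport code)", required: true },
    { name: "destination", type: "text", label: "To (airport code)", required: true },
    { name: "date", type: "date", label: "Departure date", required: true },
    { name: "passengers", type: "number", label: "Number of passengers", required: false },
  ],
};

console.log(JSON.stringify(formToToolContract(flightSearch), null, 2));
```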

### The Imperative API: Build Custom Tools

The Imperative API handles complex workflows that don't fit a simple form. You register tools in JavaScript using `navigator.modelContext`. Each tool gets a name, a description, an input schema (a JSON Schema object listing the inputs and which ones are required), and a function that runs the action.

Here's what a simple product search tool looks like:

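```javascript
// API names follow the early-preview explainer and may change before the spec is final.
// Feature-detect first: navigator.modelContext only exists where WebMCP is enabled.
if ("modelContext" in navigator) {
  navigator.modelContext.registerTool({
    name: "search_products",
    description: "Search the product catalogue by keyword, category, or price range",
    // JSON Schema describing the inputs an agent may send
    inputSchema: {
      type: "object",
      properties: {
        query: { type: "string", description: "Search keywords" },
        category: { type: "string", description: "Product category" },
        max_price: { type: "number", description: "Maximum price in GBP" }
      },
      required: ["query"]
    },
    // Runs in the page, within the user's session, when an agent calls the tool
    execute: async (params) => {
      // fetchProducts is your site's own search function (not part of WebMCP)
      const results = await fetchProducts(params);
      // MCP-style result: a list of content items the agent can read
      return { content: [{ type: "text", text: JSON.stringify({ products: results }) }] };
    }
  });
}
```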

One function call. The agent sends inputs, gets back structured data. No screenshots. No pixel guessing. No clicking through dropdowns.

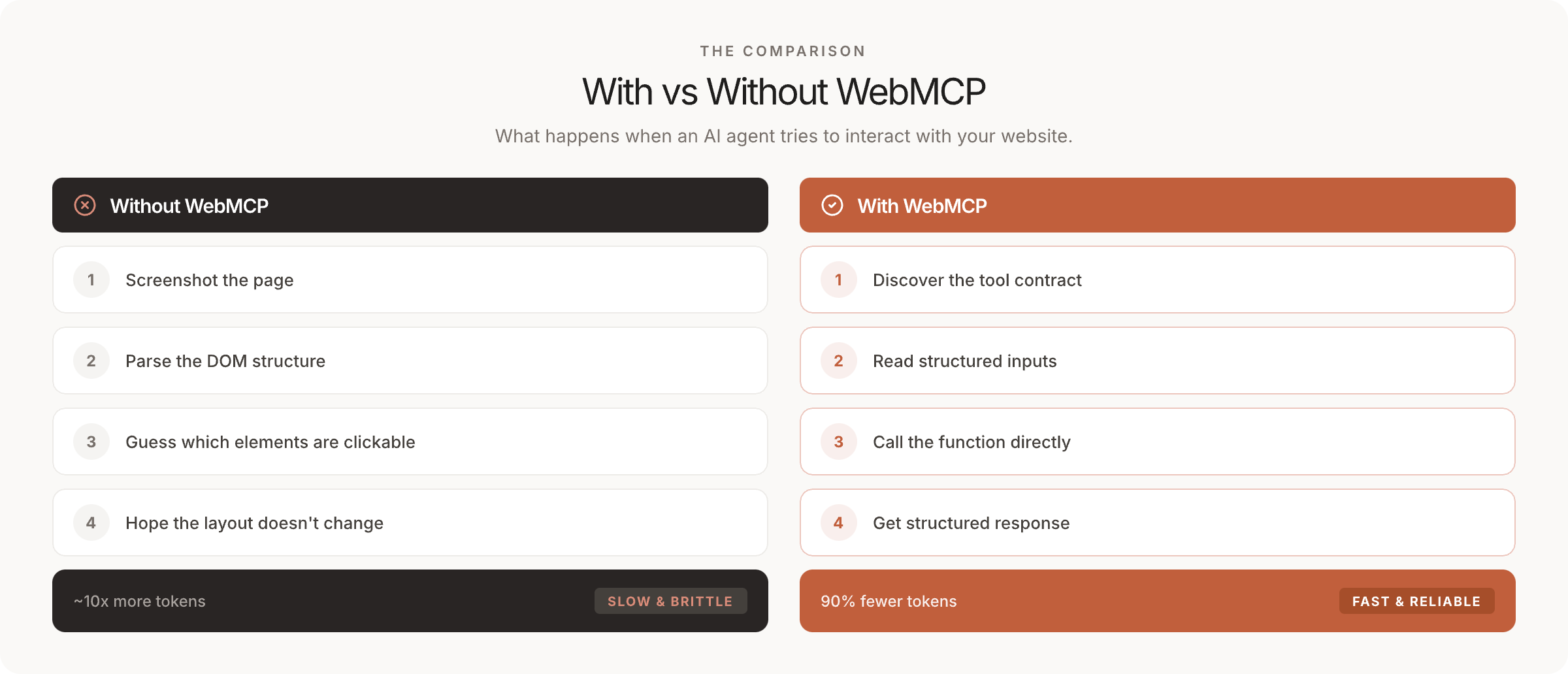

Early testing shows a 90% drop in token usage compared to DOM scraping. Faster, cheaper, more reliable — the same principle behind [dual-serving content for AI crawlers](https://www.searchable.com/blog/we-built-a-dual-served-content-system-for-ai-crawlers).

### The Security Model: Human in the Loop

The browser sits between every agent action and your website. Agents can only act within the user's browser session. A special flag tells your site whether a human or an agent submitted a form. The user stays in control.
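One practical use of that flag is an audit trail recording who actually submitted each form. The sketch below assumes the flag is exposed as a boolean `agentInvoked` property on the submit event, as described in the early-preview explainer; check the current spec before relying on the name. To keep it runnable outside a browser, `recordSubmission` (a hypothetical helper) takes plain event-like objects rather than real `SubmitEvent`s.

```javascript
// Sketch: tag each form submission as "agent" or "human" for an audit trail.
// ASSUMPTION: the WebMCP flag is exposed as a boolean event.agentInvoked.
// In a browser: form.addEventListener("submit", (e) => recordSubmission(form.id, e, auditLog));
function recordSubmission(formId, event, log) {
  const entry = {
    form: formId,
    source: event.agentInvoked === true ? "agent" : "human",
    at: new Date().toISOString(),
  };
  log.push(entry);
  return entry;
}

// Plain objects stand in for SubmitEvents so this runs outside a browser.
const auditLog = [];
recordSubmission("checkout", { agentInvoked: true }, auditLog);
recordSubmission("checkout", { agentInvoked: false }, auditLog);
console.log(auditLog.map((e) => e.source)); // [ 'agent', 'human' ]
```

Defaulting to "human" when the flag is absent keeps the handler safe in browsers that don't implement WebMCP, at the cost of under-counting agents if the flag's name changes.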

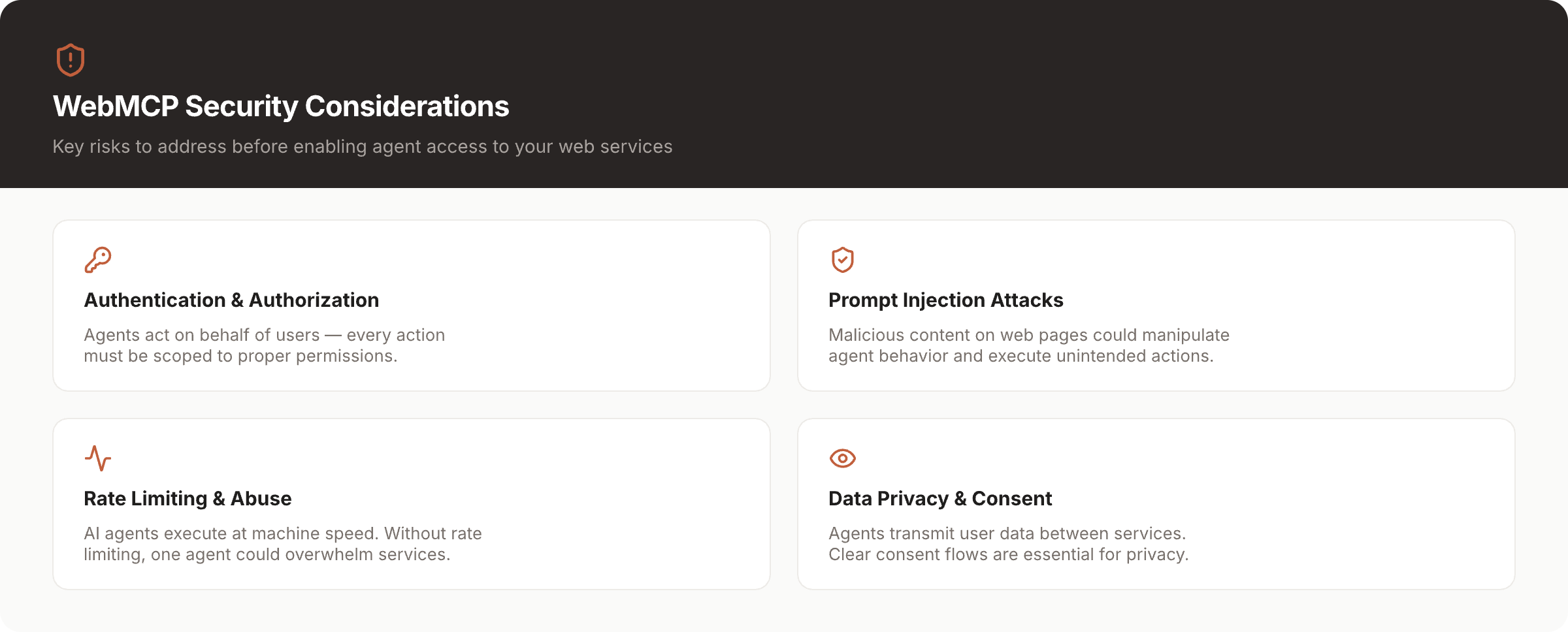

But honest assessment means acknowledging the risks. Alex Nahas, WebMCP's creator, identified the "lethal trifecta" concern: an agent with context from both a banking tab and a malicious tab, treating both as equally trusted.

The [OWASP Top 10 for Agentic Applications](https://www.giskard.ai/knowledge/owasp-top-10-for-agentic-application-2026), published December 2025, flags further threats: memory poisoning, where attackers plant false data in an agent's long-term storage; tool description deception, such as a tool labelled "add to cart" that actually completes a purchase; and audit trail gaps, where agent actions look identical to user actions.
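From the site owner's side, one mitigation for tool description deception is to split state-changing steps into separately named tools whose descriptions say exactly what they do, and to gate anything irreversible behind a human confirmation. The sketch below shows that pattern in plain JavaScript. The `irreversible` field, the `runTool` dispatcher, and the `confirm` callback are hypothetical; none of them is part of the WebMCP API.

```javascript
// Sketch: keep each tool's name and description honest about side effects, and
// refuse to run irreversible tools without an explicit human confirmation.
// "irreversible" is a hypothetical field for this sketch, not a WebMCP property.
const shopTools = [
  {
    name: "add_to_basket",
    description: "Add an item to the basket. Does not place an order or take payment.",
    irreversible: false,
    execute: async ({ sku }) => `Added ${sku} to basket`,
  },
  {
    name: "place_order",
    description: "Place the order and charge the saved payment method. Cannot be undone.",
    irreversible: true,
    execute: async () => "Order placed",
  },
];

// confirm: hypothetical callback that asks the human and resolves to true/false.
async function runTool(name, params, confirm) {
  const tool = shopTools.find((t) => t.name === name);
  if (!tool) throw new Error(`Unknown tool: ${name}`);
  if (tool.irreversible && !(await confirm(tool.description))) {
    return "Cancelled: user did not confirm";
  }
  return tool.execute(params);
}

runTool("place_order", {}, async () => false).then(console.log); // Cancelled: user did not confirm
```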

These are real, unresolved challenges. A Dark Reading survey found that 48% of cybersecurity professionals identify agentic AI as the top attack vector for 2026. The security model is early. That's precisely why "prepare now, implement carefully" is the right advice — not "ignore it" or "rush in."

## WebMCP vs MCP: What's the Difference?

MCP (Model Context Protocol) handles backend server-to-agent communication using JSON-RPC. WebMCP handles browser-based, user-present interactions through the `navigator.modelContext` API. They're complementary, not competing — MCP connects AI to backend services, while WebMCP connects AI to websites through the user's authenticated browser session.
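The difference shows up in how a tool gets called. With MCP, an AI client sends a JSON-RPC 2.0 request (the `tools/call` method, with `name` and `arguments` params, per the MCP specification) to an MCP server. With WebMCP, the browser invokes the tool's `execute` function inside the page. The sketch below builds the MCP message and, for contrast, calls an in-page tool object directly; `mcpToolCall` and `searchFlightsTool` are hypothetical names, the latter standing in for a tool registered through `navigator.modelContext`.

```javascript
// MCP: a JSON-RPC 2.0 request that an AI client sends to an MCP server
// (over stdio or HTTP). The server, not the browser, runs the tool.
function mcpToolCall(id, name, args) {
  return JSON.stringify({
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name, arguments: args },
  });
}

// WebMCP: the browser calls the page's registered execute() function in-page,
// within the user's session; no MCP server is involved.
// This object is a hypothetical stand-in for a registered tool.
const searchFlightsTool = {
  name: "search_flights",
  execute: async ({ origin, destination }) => ({
    content: [{ type: "text", text: `Flights ${origin} to ${destination}` }],
  }),
};

console.log(mcpToolCall(1, "search_flights", { origin: "LHR", destination: "JFK" }));
searchFlightsTool
  .execute({ origin: "LHR", destination: "JFK" })
  .then((result) => console.log(result.content[0].text));
```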

Google DeepMind CEO Demis Hassabis called MCP "a good protocol that's rapidly becoming an open standard for the AI agentic era." WebMCP extends that standard into the browser, where users are authenticated and in control.

The key insight is what WebMCP gets for free by running in the browser. When an agent calls a tool on your e-commerce site, it uses the user's existing session. Their login. Their basket. Their payment methods. No separate API keys. No new auth flows to build.

That's why the two protocols aren't redundant. A travel platform might use [MCP on the backend](https://www.searchable.com/blog/integrate-searchable-data-directly-to-claude-code-and-cursor-with-mcp) to connect its internal systems to AI, and WebMCP on the frontend to let browser agents search flights, compare prices, and book through the user's session.

## The Schema.org Parallel: From Nouns to Verbs

Schema.org standardised the web's nouns — teaching search engines what things are. WebMCP standardises the web's verbs — teaching AI agents what they can do. Both emerged from competitor consensus through neutral governance, and both represent transformative moments in making the web machine-readable. If you lived through the Schema.org adoption curve, you already know how this plays out.

In 2011, Google, Microsoft, Yahoo, and Yandex — direct competitors — agreed on a shared vocabulary for structured data. It was unusual. It worked. Websites that implemented Schema.org early saw 25% higher click-through rates in search results. That advantage compounded year after year as search engines leaned harder on structured data for rich snippets, knowledge panels, and eventually AI Overviews.

WebMCP follows the same pattern. Google and Microsoft co-authored the specification. The W3C provides institutional governance. Chrome 146 already runs a working implementation behind a flag. As The New Stack [observed](https://thenewstack.io/how-webmcp-lets-developers-control-ai-agents-with-javascript/), WebMCP has "cleared the most difficult hurdle any web standard faces: getting from proposal to working software."

The difference is scope. Schema.org told search engines that a page contained a product with a price, a rating, and availability. WebMCP tells AI agents that a website can search products, filter by category, add items to a basket, and complete checkout. Nouns became verbs. Descriptions became capabilities.
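To see the difference side by side, the sketch below puts a Schema.org `Product` description (standard JSON-LD vocabulary: `@context`, `@type`, `offers`) next to a tool contract shaped like an MCP tool definition (name, description, and a JSON Schema `inputSchema`). The product and the `add_to_basket` tool are illustrative, not taken from any real site.

```javascript
// Schema.org (a noun): describes what the page contains, as JSON-LD.
const productJsonLd = {
  "@context": "https://schema.org",
  "@type": "Product",
  name: "Trail Running Shoe",
  offers: {
    "@type": "Offer",
    price: "89.99",
    priceCurrency: "GBP",
    availability: "https://schema.org/InStock",
  },
};

// WebMCP (a verb): describes what an agent can do, as a tool contract.
// Name and fields are illustrative.
const addToBasketTool = {
  name: "add_to_basket",
  description: "Add a product to the user's basket",
  inputSchema: {
    type: "object",
    properties: {
      sku: { type: "string", description: "Product SKU" },
      quantity: { type: "number", description: "How many to add" },
    },
    required: ["sku"],
  },
};

// The first would be embedded in a <script type="application/ld+json"> tag;
// the second would be registered with the browser as a callable tool.
console.log(JSON.stringify(productJsonLd));
console.log(Object.keys(addToBasketTool.inputSchema.properties));
```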

Dan Petrovic, founder of [Dejan AI](https://dejan.ai/blog/webmcp/), frames this as four new disciplines:

Each one maps to an existing SEO discipline. The skills transfer, even if the implementation is new.

Andrea Volpini, CEO of WordLift, puts the stakes plainly: "In 2026, entity representation determines whether AI systems recommend you at all."

At Searchable, we've been tracking this shift from search-engine-readable to AI-agent-readable websites. WebMCP validates the thesis: [AI search engine optimisation](https://www.searchable.com/blog/what-is-ai-search-engine-optimisation) is evolving from a content strategy into a full-stack website strategy. [SEO becomes two jobs](https://www.searchable.com/blog/ai-search-vs-seo-2026) — driving clicks from humans, and supplying clean, trusted inputs for AI agents that may never visit your site directly.

## Who Needs to Care About WebMCP (And Who Can Wait)

E-commerce, travel, and SaaS companies with self-serve flows should act on WebMCP now. B2B services and content publishers should prepare by auditing their forms and planning tool architecture. Pure content sites can monitor the standard's development. Not every website needs WebMCP today — but the ones that need it first have the most to gain.

### Act Now

If your website has transactional flows — shopping baskets, booking engines, self-serve account management, customer support portals — WebMCP is directly relevant. These are the workflows AI agents are already trying to complete on your behalf. The $67 billion in AI-assisted commerce during Cyber Week didn't come from agents reading blog posts. It came from agents searching, comparing, and purchasing.

[E-commerce sites](https://www.searchable.com/blog/d2c-ecommerce-ai-search-optimization-2026), travel platforms, SaaS products with self-serve onboarding, and any business where an agent could complete a transaction should be exploring WebMCP's Declarative API against their existing forms now. The early preview in Chrome 146 is available for testing behind a flag.

### Prepare Now, Implement Later

Content publishers, B2B service companies, and lead-gen sites won't see immediate impact. Agents aren't booking enterprise consultations on their own yet. But the groundwork matters. Audit your forms. Map user journeys to possible tool contracts. Think about which actions an agent might want to perform on your site in 12 months.

The businesses that had clean Schema.org markup before Google launched rich snippets captured years of competitive advantage. The same preparation logic applies here.

### Watch and Learn

Portfolio sites, personal blogs, brochure websites with no transactional flows — WebMCP doesn't change your world today. Stay informed, but don't allocate budget. When the standard matures and cross-browser adoption solidifies, reassess.

**The honest take:** most websites don't need to implement WebMCP this quarter. But if you're in a category where agents complete transactions, waiting costs more than preparing.

## How to Prepare: A WebMCP Readiness Checklist

To prepare for WebMCP: audit all website forms for agent-callable potential, define a tool contract mapping user journeys to structured actions, prioritise tools by conversion value, ensure forms have clear labels and validation, and test Chrome 146's early preview behind the experimental flag. This isn't a six-month project. It's a structured assessment you can start this week.

### 1. Audit Your Forms

List every form on your website. Search bars, filters, booking widgets, contact forms, checkout flows, account management pages. Each one is a potential WebMCP tool.

A travel site might find 15 forms. An e-commerce platform might find 40. The Declarative API works with existing HTML forms, so this audit shows how much of your site is already WebMCP-compatible.
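The audit itself can be as simple as a spreadsheet. As a minimal sketch, assuming you record for each form whether it is a native HTML `<form>` (which the Declarative API can annotate) or a JavaScript-only widget (which, we assume, would need the Imperative API or a rebuild), a few lines summarise how much of the site is ready. The field names and example forms are hypothetical.

```javascript
// Sketch of a form inventory, filled in by hand or by a crawler you already run.
// Field names here are hypothetical.
const formInventory = [
  { page: "/", form: "site search", nativeHtmlForm: true },
  { page: "/products", form: "category filters", nativeHtmlForm: true },
  { page: "/book", form: "booking widget", nativeHtmlForm: false }, // JS-only component
  { page: "/contact", form: "contact form", nativeHtmlForm: true },
];

// Native <form> elements are the immediate Declarative API candidates;
// JS-only widgets are flagged for the Imperative API (an assumption of this sketch).
function summariseInventory(inventory) {
  const ready = inventory.filter((f) => f.nativeHtmlForm);
  return {
    total: inventory.length,
    declarativeReady: ready.length,
    share: ready.length / inventory.length,
    needsImperative: inventory.filter((f) => !f.nativeHtmlForm).map((f) => f.form),
  };
}

console.log(summariseInventory(formInventory));
```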

### 2. Define Your Tool Contract

For each form, ask: what would an AI agent want to do here? Map your existing user journeys to potential tool definitions. A product search form becomes a `search_products` tool. A booking widget becomes a `check_availability` tool. A returns portal becomes an `initiate_return` tool. Write these out in plain language before touching any code.
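Writing the contract as data first makes it easy to review before any WebMCP code exists. The sketch below uses the three tool names from the paragraph above, with illustrative journeys and inputs, plus a simple review pass. The snake_case rule and the `reviewContract` helper are house-style assumptions, not WebMCP requirements.

```javascript
// A first-draft tool contract written as data, before any WebMCP code.
// Journeys and inputs are illustrative.
const toolContract = [
  {
    name: "search_products",
    journey: "Shopper searches the catalogue",
    description: "Search products by keyword, category, or maximum price",
    inputs: { query: "string (required)", category: "string", max_price: "number" },
  },
  {
    name: "check_availability",
    journey: "Guest checks dates in the booking widget",
    description: "Check whether a room is available for the given dates",
    inputs: { check_in: "date (required)", check_out: "date (required)" },
  },
  {
    name: "initiate_return",
    journey: "Customer starts a return from the returns portal",
    description: "", // not written yet
    inputs: { order_id: "string (required)" },
  },
];

// Review rules: snake_case names, unique names, non-empty descriptions.
function reviewContract(contract) {
  const problems = [];
  const seen = new Set();
  for (const tool of contract) {
    if (!/^[a-z][a-z0-9_]*$/.test(tool.name)) problems.push(`${tool.name}: use snake_case`);
    if (seen.has(tool.name)) problems.push(`${tool.name}: duplicate name`);
    seen.add(tool.name);
    if (!tool.description.trim()) problems.push(`${tool.name}: missing description`);
  }
  return problems;
}

console.log(reviewContract(toolContract)); // [ 'initiate_return: missing description' ]
```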

### 3. Prioritise by Conversion Value

Not all tools are equal. Which agent-callable actions would drive the most revenue? For most e-commerce sites, product search and add-to-basket are the highest-value tools. For SaaS, it might be plan comparison and trial signup. For travel, it's search and booking. Start with the tools that directly influence transactions.
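To make "conversion value" comparable across tools, one rough approach is to estimate monthly revenue per tool as uses × conversion rate × average order value, then sort by it. The figures below are invented placeholders, not benchmarks; replace them with your own analytics. The `rankByRevenue` helper is hypothetical.

```javascript
// Rank candidate tools by a rough monthly revenue estimate.
// All figures are made-up placeholders for illustration.
const candidates = [
  { tool: "search_products", monthlyUses: 50000, conversionRate: 0.03, avgOrderValue: 60 },
  { tool: "add_to_basket", monthlyUses: 12000, conversionRate: 0.25, avgOrderValue: 60 },
  { tool: "initiate_return", monthlyUses: 2000, conversionRate: 0, avgOrderValue: 60 },
  { tool: "subscribe_newsletter", monthlyUses: 8000, conversionRate: 0.01, avgOrderValue: 20 },
];

function rankByRevenue(list) {
  return list
    .map((c) => ({ tool: c.tool, estRevenue: c.monthlyUses * c.conversionRate * c.avgOrderValue }))
    .sort((a, b) => b.estRevenue - a.estRevenue);
}

for (const { tool, estRevenue } of rankByRevenue(candidates)) {
  console.log(`${tool}: ~£${Math.round(estRevenue)} / month`);
}
```

A tool like `initiate_return` scores zero on this measure even though it may still be worth building; treat the ranking as a starting order, not a verdict.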

### 4. Clean Up Your Foundations

WebMCP's Declarative API relies on clean HTML. Forms need clear labels, proper input types, and solid validation. If your forms are messy — unlabelled fields, vague placeholders, JavaScript-only validation — fix that first. This helps both agents and human users.
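A quick way to find messy fields is a small audit script. The sketch below runs on plain objects so it works outside a browser (in a page you could build them from `form.elements` and each field's associated label). The `auditFields` helper and its rules are illustrative heuristics, not checks defined by WebMCP.

```javascript
// Sketch: flag form fields that give an agent (or a screen reader) too little to go on.
function auditFields(fields) {
  const issues = [];
  for (const f of fields) {
    const hasLabel = Boolean(f.label && f.label.trim());
    if (!hasLabel && f.placeholder) {
      issues.push(`${f.name}: placeholder used instead of a <label>`);
    } else if (!hasLabel) {
      issues.push(`${f.name}: no <label>`);
    }
    // Heuristic: names that suggest structured data deserve a specific input type.
    if (f.type === "text" && /email|date|phone|price|quantity/i.test(f.name)) {
      issues.push(`${f.name}: use a specific input type, not "text"`);
    }
  }
  return issues;
}

const checkoutFields = [
  { name: "email", type: "text", label: "", placeholder: "Your email" },
  { name: "quantity", type: "number", label: "Quantity" },
  { name: "promo", type: "text", label: "" },
];

console.log(auditFields(checkoutFields));
```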

### 5. Test the Early Preview

Chrome 146 ships WebMCP behind an experimental flag, which you enable from the `chrome://flags` page. Enable it, register a simple tool using the Imperative API, and see how an agent discovers and interacts with it. Hands-on experience with the standard now means faster implementation when it reaches production readiness.
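Before pointing an agent at a tool, it can help to call the tool's `execute` function yourself with sample inputs. The sketch below uses a hypothetical in-memory stand-in for `navigator.modelContext` that only records registrations, so the same registration code can be exercised in Node or a unit test. It assumes the registration method is named `registerTool`, as in the early-preview explainer; adjust if your Chrome build exposes a different name. `createMockModelContext`, `registerPingTool`, and the `ping` tool are all hypothetical.

```javascript
// Hypothetical in-memory stand-in for navigator.modelContext. It records
// registered tools so their execute() functions can be called directly.
// ASSUMPTION: the real method is named registerTool, per the early-preview explainer.
function createMockModelContext() {
  const tools = new Map();
  return {
    registerTool(tool) {
      tools.set(tool.name, tool);
    },
    async call(name, args) {
      const tool = tools.get(name);
      if (!tool) throw new Error(`No tool registered as ${name}`);
      return tool.execute(args);
    },
  };
}

// Registration code under test: it takes the context as a parameter, so it can
// receive navigator.modelContext in the browser or the mock in tests.
function registerPingTool(modelContext) {
  modelContext.registerTool({
    name: "ping",
    description: "Health check: echoes a message back",
    inputSchema: {
      type: "object",
      properties: { message: { type: "string" } },
      required: ["message"],
    },
    execute: async ({ message }) => ({ content: [{ type: "text", text: `pong: ${message}` }] }),
  });
}

const ctx = createMockModelContext();
registerPingTool(ctx);
ctx.call("ping", { message: "hello" }).then((r) => console.log(r.content[0].text)); // pong: hello
```

In the browser, the same function would receive the real context, for example `if ("modelContext" in navigator) registerPingTool(navigator.modelContext);`.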

### 6. Monitor the Standard

Track the [W3C spec](https://github.com/webmachinelearning/webmcp) for updates. Microsoft co-authored it, so Edge support is likely. Mozilla and Apple haven't committed yet. Broader announcements are expected by mid-to-late 2026 at Google I/O or Cloud Next.

Building an [AEO strategy](https://www.searchable.com/workflows/aeo-strategy) that accounts for WebMCP now means you won't be scrambling when adoption accelerates.

## What Happens to Websites That Don't Adopt WebMCP?

Websites without WebMCP force AI agents to fall back on brittle DOM scraping — slow, unreliable, and expensive. As agent-driven commerce grows, sites with clean tool contracts will be preferred by AI agents, creating a compounding competitive disadvantage for businesses that don't adopt the standard.

Without WebMCP, an AI agent has to screenshot the page, parse the DOM, guess which elements are clickable, and hope the layout doesn't change. That's slow and expensive, using roughly 10x more tokens than a structured tool call. And it's unreliable.

And when an agent hits friction on your site, it doesn't complain. It goes somewhere else.

This doesn't mean your website disappears overnight. Most businesses survived without Schema.org markup. But the ones that adopted it early captured disproportionate visibility for years. The pattern repeats: structured beats unstructured, and the gap widens as AI systems increasingly favour clean, machine-readable interfaces.

Dan Petrovic puts it directly: "The ones without them won't even exist in the agent's decision space."

The cost isn't catastrophic today. It's incremental. Every agent interaction that fails on your site and succeeds on a competitor's is a transaction you'll never see in your analytics. As McKinsey's $1 trillion projection materialises, those invisible losses compound.

---

The agentic web isn't arriving. It's here — $67 billion in AI-assisted commerce during a single shopping week, with McKinsey projecting $1 trillion in US retail revenue by 2030. WebMCP is the infrastructure layer that determines whether your website participates in that economy or gets bypassed.

The pattern is familiar. Schema.org rewarded early adopters with years of compounding visibility. WebMCP will reward early adopters with years of compounding agent-driven conversions. The difference is that the stakes are measured in transactions, not just traffic.

Start with the readiness checklist. Audit your forms. Define your tool contracts. Test the Chrome 146 preview. The businesses that understand their website as a set of callable tools — not just a collection of pages — will be the ones AI agents choose to work with.

If you're building an AEO strategy that accounts for the agentic web, [Searchable's AI visibility tools](https://www.searchable.com/features/visibility) can help you track how AI systems see and interact with your brand today.
